Hybrid Data Governance: Tokenization, Masking, and NER for Structured & Unstructured Data

Executive Summary

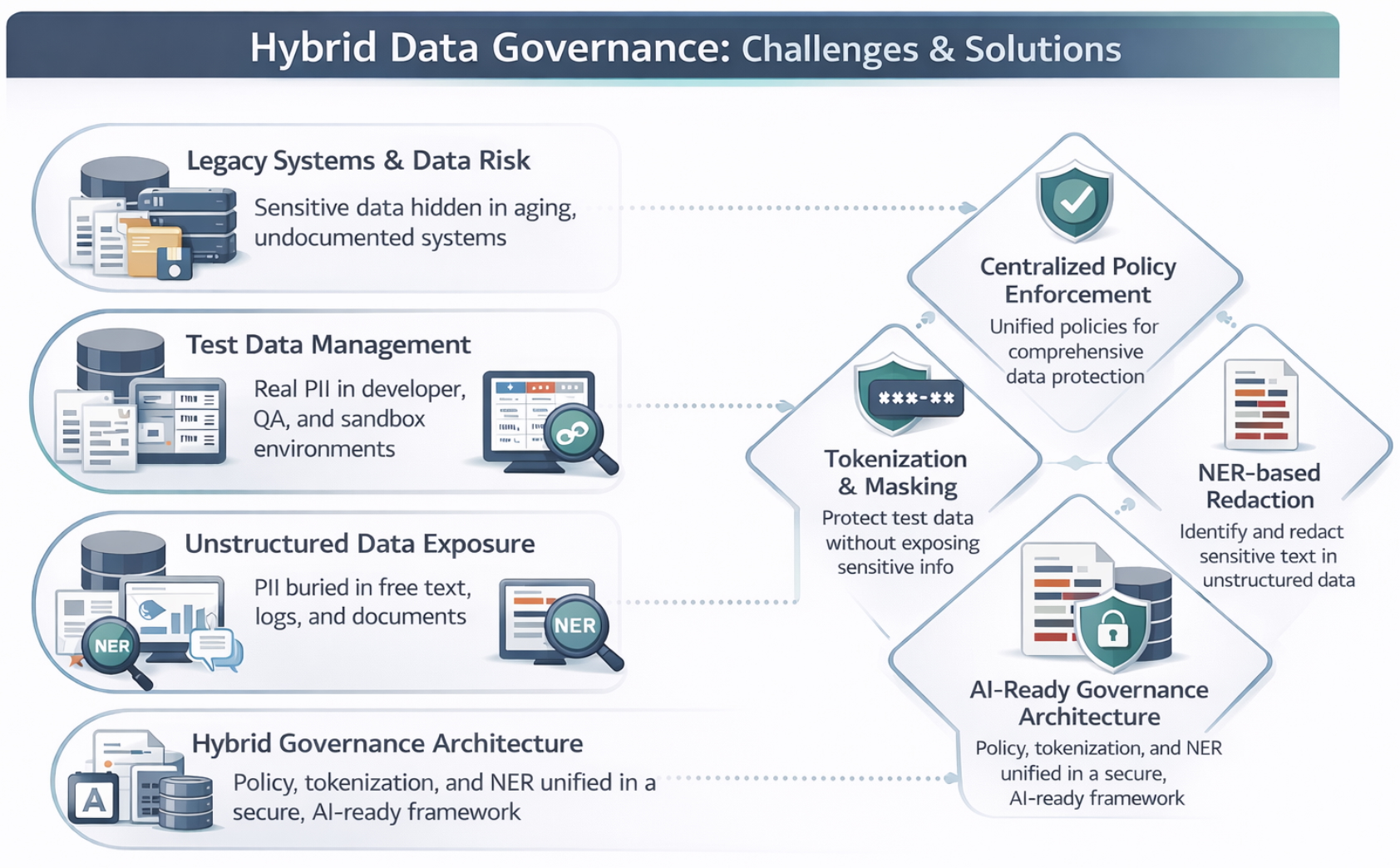

Modern enterprises manage a staggering amount of sensitive data hidden within unstructured notes, emails, and logs, alongside traditional structured databases. If left ungoverned, this data exposes the organization to severe regulatory fines, reputational damage, and operational failure in the event of a compromise. Furthermore, as organizations begin to scale AI/ML initiatives, legacy systems with disparate data sources significantly elevate the risk of leaking sensitive information into external ecosystems.

To mitigate these risks, this article demonstrates a hybrid data governance framework through a conceptual Proof of Concept (PoC). By integrating Tokenization, Masking, and Named Entity Recognition (NER), the approach protects sensitive information both at rest and in transit. It supports stringent compliance requirements, enforces "need-to-know" access, and gives advanced analytics and AI initiatives a secure foundation on which to scale.

Introduction

Data is the backbone of modern enterprise innovation. AI, analytics, and automation rely on vast amounts of data to deliver actionable insights that drive competitive advantage. However, sensitive information often resides both in structured fields, such as Social Security numbers and credit card details, and in unstructured text, including customer comments, system logs, and support notes.

In a landscape where data is a premier strategic asset, robust security is a prerequisite for maintaining compliance with stringent regulations such as GDPR, HIPAA, and PCI DSS. The presence of legacy systems further complicates this mission, creating significant barriers to securing Personally Identifiable Information (PII).

This article demonstrates a hybrid governance framework that combines Tokenization, Masking, and NER-driven Redaction to provide comprehensive protection while ensuring data remains a high-utility asset for the organization.

This PoC demonstrates the core enforcement logic used in enterprise governance systems. In production, policies are externalized and enforced by centralized services, but the underlying decision model remains the same.

This PoC uses spaCy’s large NER model to demonstrate unstructured data governance. In production environments, enterprises typically deploy larger or transformer-based models—often fine-tuned for domain-specific PII—to maximize recall and regulatory confidence.

Challenges in Modern Data Management

Implementing modern data management isn't without its obstacles. Here are a few key challenges enterprises face today:

The Legacy System Dilemma

Legacy applications remain prevalent even in today's digitally advanced economy. Securing data at rest, in transit, and during migration from legacy systems to the cloud poses significant risks. If sensitive information is not properly secured within these older environments, it becomes a critical bottleneck for modernization and a primary vector for breaches.

Solving the "Test Data Gap" - Test Data Management (TDM)

One of the most persistent bottlenecks in the software development lifecycle is the availability of high-fidelity test data. Developers often find themselves in a "catch-22": using synthetic data that fails to capture real-world edge cases, or waiting weeks for security clearance to access production samples.

By applying this governance logic at the point of data extraction, a self-service pipeline can be created that transforms a subset of production data into a "safe-to-share" version. This ensures that lower environments remain compliant with regulatory requirements (like GDPR or HIPAA) without sacrificing the realism required for rigorous debugging and performance testing.

The Unstructured Blind Spot

Traditional governance tools typically focus on structured database columns, leaving sensitive PII—such as names, locations, and dates of sensitive life events—exposed within emails, system logs, and free-text notes. Conventional masking cannot "see" into these strings, creating a massive, ungoverned surface area for potential data compromises.

The Compliance Complexity Trap

Global regulations like GDPR, HIPAA, and PCI DSS now demand granular tracking and verifiable PII identification. Organizations failing to bridge the gap between legacy storage and modern compliance requirements face escalating legal liabilities and reputational risk, both of which have a direct, negative impact on corporate revenue.

The "Data Utility" Dilemma

Security often comes at the cost of utility. Over-redaction or poor masking can render data useless for the AI models and analysts that need context to be accurate. The core challenge is providing governed, high-fidelity data that maintains analytical value without risking the exposure of individual identities.

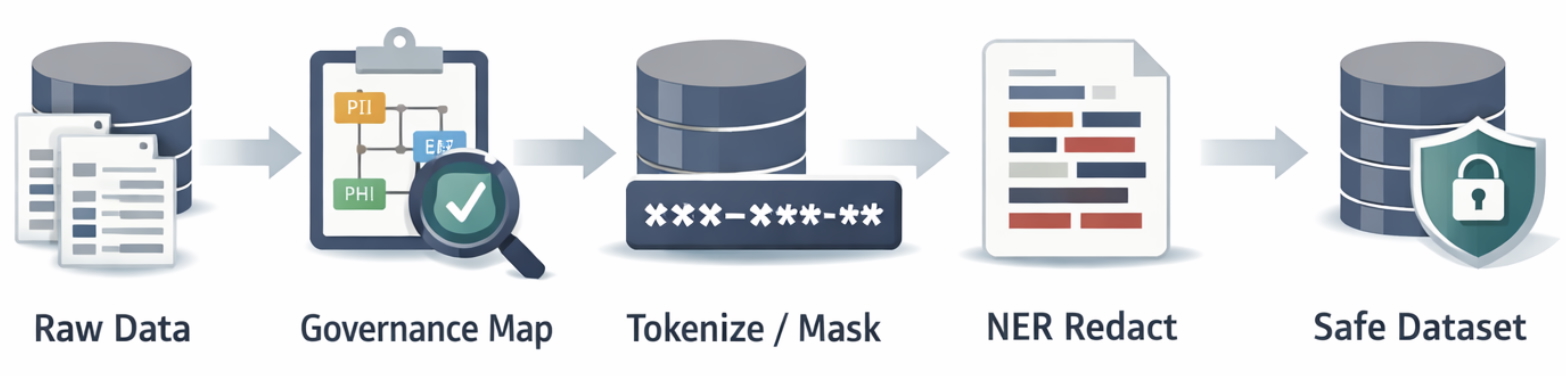

Practical Hybrid Solution: The Governance Framework

This solution integrates structured and unstructured data governance into a single, unified pipeline, ensuring that every data point—regardless of its format—is subjected to the appropriate security control.

The Governance Map

The Governance Map acts as the "Brain" of the pipeline, defining sensitivity levels and the required transformation action for every data attribute.

| Action | Technical Description | Business Value |
|---|---|---|
| TOKENIZE | Uses SHA-256 hashing to ensure the same input always generates the same hash value, providing secure, consistent tokenization. | Maintains referential integrity for analytics without exposing PII, while ensuring consistent mapping of sensitive data. |
| PARTIAL MASK | Applies format-preserving masks to maintain data utility (e.g., 555-****) | Allows for demographic or regional analysis while protecting identity |
| REDACT | Utilizes NER to identify and completely remove entities from unstructured strings | Neutralizes the "Unstructured Blind Spot" in logs and notes |
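As a rough illustration, the Governance Map can be thought of as a lookup from attribute to action. The sketch below is a minimal Python rendering under stated assumptions: the field names (`ssn`, `phone`, `notes`), the names `GOVERNANCE_MAP` and `govern_record`, and the pass-through default for unmapped fields are all hypothetical, and the `REDACT` action is a simple placeholder rather than real NER.

```python
import hashlib

# Hypothetical governance map: attribute name -> required action.
GOVERNANCE_MAP = {
    "ssn": "TOKENIZE",
    "phone": "PARTIAL_MASK",
    "notes": "REDACT",
}

def tokenize(value: str) -> str:
    """Deterministic SHA-256 digest: the same input always yields the same token."""
    return hashlib.sha256(value.encode("utf-8")).hexdigest()

def partial_mask(value: str, keep: int = 4) -> str:
    """Keep the first `keep` characters; mask later letters and digits, keep separators."""
    masked_tail = "".join("*" if ch.isalnum() else ch for ch in value[keep:])
    return value[:keep] + masked_tail

def redact_placeholder(value: str) -> str:
    """Stand-in for NER-based redaction: drops the whole value."""
    return "[REDACTED]"

ACTIONS = {
    "TOKENIZE": tokenize,
    "PARTIAL_MASK": partial_mask,
    "REDACT": redact_placeholder,
}

def govern_record(record: dict) -> dict:
    """Apply the mapped action to each field; unmapped fields pass through (an assumption)."""
    governed = {}
    for field, value in record.items():
        action = GOVERNANCE_MAP.get(field)
        governed[field] = ACTIONS[action](value) if action else value
    return governed

if __name__ == "__main__":
    print(govern_record({"ssn": "123-45-6789", "phone": "555-1234", "region": "West"}))
```

Keeping the map as data rather than code mirrors the idea that policies are externalized in production. A stricter variant might reject or redact unmapped fields instead of passing them through.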

Structured Data Governance

For fixed-field data such as IDs and financial markers, the pipeline applies Tokenization. Even if the governed dataset is compromised, the tokens cannot be mapped back to the original values without access to the hardened Vault.
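One way to picture vault-backed tokenization is sketched below. It is not the PoC's actual code. It swaps plain SHA-256 for a keyed HMAC-SHA-256, because unkeyed hashes of low-entropy values such as Social Security numbers can be recovered by enumerating the input space. The `TokenVault` class, the `tok_` prefix, and the in-memory dictionary are hypothetical stand-ins for a hardened, access-controlled vault service.

```python
import hashlib
import hmac

class TokenVault:
    """In-memory stand-in for a hardened token vault (illustrative only)."""

    def __init__(self, secret_key: bytes):
        self._key = secret_key
        self._token_to_value = {}  # a real vault would be an access-controlled store

    def tokenize(self, value: str) -> str:
        # A keyed hash keeps tokens deterministic (same input, same token)
        # while making them infeasible to recompute without the key.
        digest = hmac.new(self._key, value.encode("utf-8"), hashlib.sha256).hexdigest()
        token = "tok_" + digest[:32]
        self._token_to_value[token] = value
        return token

    def detokenize(self, token: str) -> str:
        # Raises KeyError for unknown tokens; callers need vault access to get here.
        return self._token_to_value[token]

if __name__ == "__main__":
    vault = TokenVault(secret_key=b"demo-key-not-for-production")
    token = vault.tokenize("123-45-6789")
    print(token, "->", vault.detokenize(token))
```

Because the token is deterministic, joins across governed tables still line up, which is what preserves referential integrity for analytics.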

Unstructured Data Governance (NER)

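To protect free text, the pipeline employs Named Entity Recognition (NER). Unlike static masking, which matches fixed patterns, NER uses the surrounding context to locate entities. With domain-specific fine-tuning, a model can also learn finer distinctions, such as a "date of birth" versus a "transaction date."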

Named Entity Recognition (NER) is an AI technique that automatically identifies sensitive entities—such as names, locations, and dates—inside free-text data so they can be governed consistently across the enterprise.
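The redaction step can be sketched as two small functions. This is a minimal example under stated assumptions, not the PoC's implementation: `redact_spans` and `redact_with_spacy` are hypothetical names, the set of sensitive labels is an illustrative choice, and entities are replaced with a `[LABEL]` placeholder rather than deleted outright so the sentence stays readable. The spaCy-specific part only relies on `doc.ents` and each entity's `start_char`, `end_char`, and `label_` attributes.

```python
SENSITIVE_LABELS = ("PERSON", "GPE", "LOC", "DATE")  # illustrative choice

def redact_spans(text, spans, labels=SENSITIVE_LABELS):
    """Replace each (start, end, label) span with [LABEL] if the label is sensitive."""
    pieces, cursor = [], 0
    for start, end, label in sorted(spans):
        if label not in labels or start < cursor:
            continue  # skip non-sensitive labels and overlapping spans
        pieces.append(text[cursor:start])
        pieces.append(f"[{label}]")
        cursor = end
    pieces.append(text[cursor:])
    return "".join(pieces)

def redact_with_spacy(text, nlp):
    """Run a loaded spaCy pipeline and redact the entities it finds."""
    doc = nlp(text)
    spans = [(ent.start_char, ent.end_char, ent.label_) for ent in doc.ents]
    return redact_spans(text, spans)

# Usage, assuming spaCy and the en_core_web_lg model are installed:
#   import spacy
#   nlp = spacy.load("en_core_web_lg")
#   print(redact_with_spacy("John Smith moved to Boston last May.", nlp))
```

Separating span replacement from entity detection means the same redaction logic can sit behind a different or fine-tuned model without changes.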

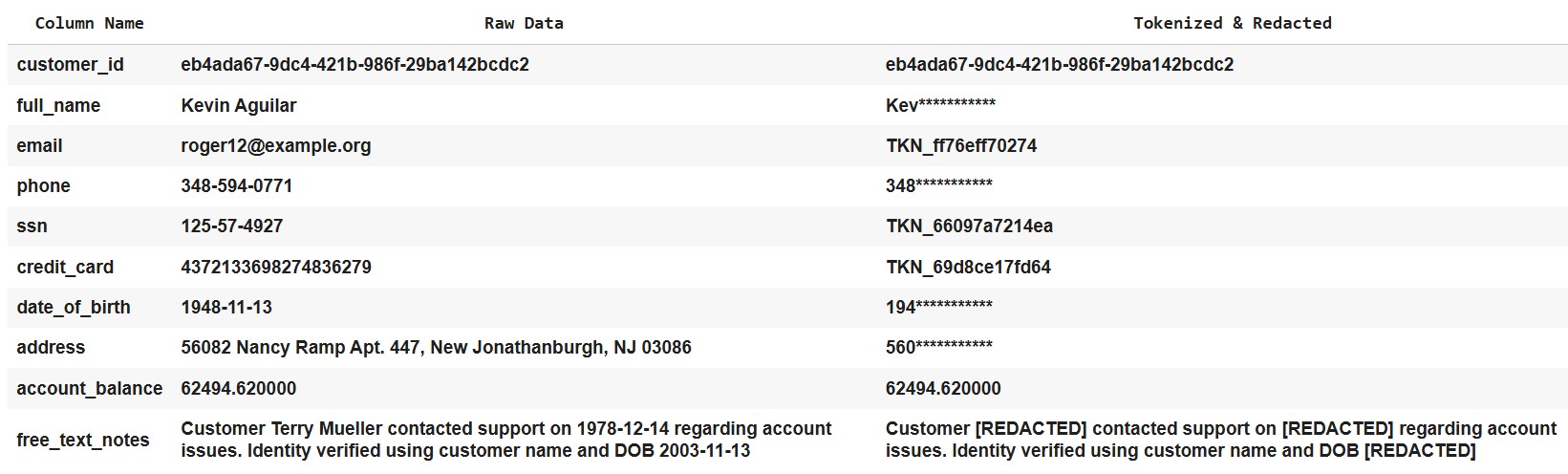

The Governed Dataset Output

The final result of this pipeline is a high-fidelity, audit-ready dataset. For example, the table below presents both the raw and tokenized/redacted data, generated using synthetic data, for visualization.

By combining these three techniques, the enterprise achieves:

- Reduced Liability: High-risk PII is removed from the general environment to minimize exposure and potential harm.

- Operational Speed: Teams no longer wait weeks for "data cleaning" approval.

- AI Readiness: Governed datasets can be ingested by external LLMs or internal analytics models without exposing raw PII.

Strategic Impact: Why This Matters to Leadership

Implementing a hybrid governance framework is not merely a technical upgrade; it is a strategic investment in the organization's data maturity. For leadership, this approach delivers four critical business outcomes:

Proactive Risk Mitigation

Traditional security is often reactive. This framework instead neutralizes data at the source. By replacing sensitive PII with tokens and redaction placeholders, the organization significantly reduces its blast radius in the event of a breach: even if governed data is accessed, it remains unintelligible and of little value to unauthorized actors.

Automated Compliance & Governance

Global regulations—such as GDPR, HIPAA, and PCI DSS—demand more than just "safety"; they require proof of control. This solution automates the identification and protection of sensitive data across disparate legacy and modern systems, ensuring the organization remains audit-ready without manual, error-prone interventions.

Accelerated Business Enablement

The biggest bottleneck for AI and analytics is often the Data Privacy Review. By providing pre-governed, high-utility datasets, this framework removes the friction between Security, IT, and Innovation. Analysts and AI models can operate on safe data immediately, significantly reducing the Time-to-Insight for critical business decisions.

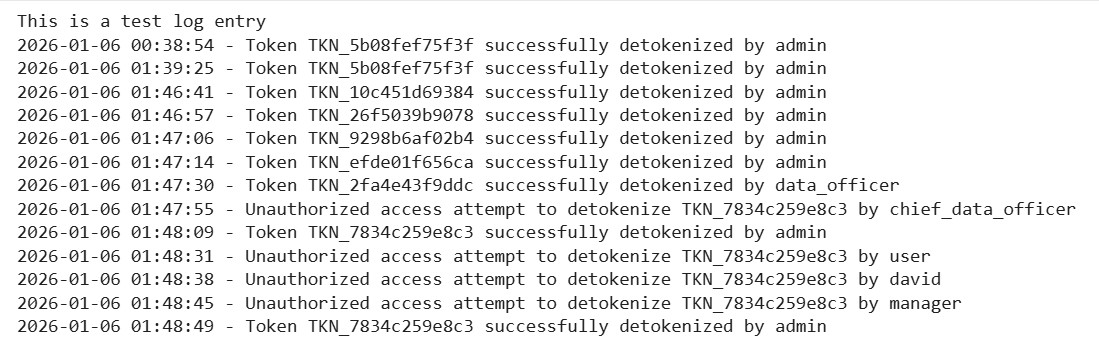

End-to-End Auditability

Transparency is a cornerstone of trust. Every tokenization and redaction action within this pipeline is traceable and logged. This provides a robust audit trail that demonstrates a "Security by Design" posture to regulators, partners, and stakeholders.
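A common way to make such a log tamper-evident is to chain entries by hash, so that altering any earlier entry invalidates everything after it. The sketch below illustrates the idea under stated assumptions: the function names, the entry fields (`action`, `field`, `actor`), and the in-memory list are hypothetical, and a production system would add timestamps and write to protected storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry's predecessor

def _hash_body(body: dict) -> str:
    """Hash a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

def append_event(log: list, action: str, field: str, actor: str) -> None:
    """Append a governance event linked to the previous entry's hash."""
    prev_hash = log[-1]["entry_hash"] if log else GENESIS_HASH
    body = {"action": action, "field": field, "actor": actor, "prev_hash": prev_hash}
    log.append({**body, "entry_hash": _hash_body(body)})

def verify_chain(log: list) -> bool:
    """Return True only if every entry is intact and correctly linked."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if entry["prev_hash"] != prev_hash or entry["entry_hash"] != _hash_body(body):
            return False
        prev_hash = entry["entry_hash"]
    return True

if __name__ == "__main__":
    audit_log = []
    append_event(audit_log, "TOKENIZE", "ssn", "etl-service")
    append_event(audit_log, "REDACT", "notes", "etl-service")
    print(verify_chain(audit_log))
```

Hash chaining detects after-the-fact edits rather than preventing them, so it is best paired with write-once storage and restricted access to the log itself.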

Actionable Recommendations for Implementation

To successfully transition to a hybrid governance model, organizations should prioritize the following steps:

- Deploy a Unified Hybrid Framework: Implement a single pipeline that handles both structured and unstructured data streams simultaneously. This eliminates "security silos" and ensures consistent protection across the enterprise.

- Match Technique to Data Type: Utilize Tokenization and Masking for structured database columns to maintain referential integrity, while applying NER-based redaction to neutralize sensitive entities within free-text logs and documents.

- Harden the Governance Vault: Maintain a secure, access-controlled token vault. Ensure all tokenization and detokenization events are captured in tamper-proof audit logs to satisfy global compliance requirements (e.g., GDPR, HIPAA, PCI DSS).

- Operationalize for Innovation: Integrate these governance pipelines directly with your AI, ML, and BI platforms. This allows your teams to build models on safe, high-utility data without waiting for manual privacy approvals.

- Cultivate a Privacy-First Culture: Train cross-functional teams on the technical governance framework and broader data privacy best practices. Technology is only as effective as the policy and people supporting it.

The Bottom Line: Security Without Sacrifice

Sensitive data is no longer confined to neat database rows; it is scattered across logs, chats, and documents. A layered, hybrid approach—combining Tokenization, Masking, and NER—allows the modern enterprise to:

- Neutralize Risk: Protect PII at the source, reducing the impact of potential breaches.

- Automate Compliance: Meet the rigorous standards of GDPR, HIPAA, and PCI DSS.

- Fuel Innovation: Unlock safe, high-utility data for AI and advanced analytics.

- Ensure Accountability: Maintain a transparent, tamper-proof audit trail.

This framework bridges the gap between high-level security strategy and technical execution, providing a resilient foundation for the data-driven future.

In advanced environments, policy-based controls are often augmented with AI-assisted discovery models to surface hidden sensitive data in poorly labeled legacy systems—a topic explored in a follow-up article.

#CIO #CTO #CDO #DataGovernance #AIGovernance #DataPrivacy #RiskManagement #EnterpriseAI